How did radiation therapy start?

In late 1895, a German physicist named Wilhelm Conrad Roentgen noticed an unusual glow on a fluorescent screen while experimenting with cathode rays in his laboratory. This observation, which he documented shortly after, revealed a new form of energy capable of passing through opaque materials. He called the new rays X-rays, unaware that his accidental finding would ignite a global race to harness this invisible energy for medical healing. [2] Within weeks, the potential for these rays to visualize the human skeleton became clear, but doctors quickly realized that the same energy capable of peering through skin might also affect the tissues it touched. [4]

The scientific community reacted with immediate fervor. By early 1896, just months after the discovery, reports began circulating about the biological effects of radiation. While some focused on the diagnostic power of the rays, others saw a potential weapon against cancer. [2] It was a time of rapid, often reckless experimentation, where the boundary between research and clinical application was incredibly thin.

# Early Discoveries

The initial excitement was not limited to Roentgen’s X-rays. In 1896, Henri Becquerel discovered radioactivity while studying uranium salts, realizing that certain materials emitted radiation naturally without external power sources. [4] Shortly after, Marie and Pierre Curie isolated polonium and radium, marking a definitive shift in physics and chemistry. [7] These radioactive elements offered a continuous, portable source of energy, unlike X-ray tubes which required bulky equipment and electricity.

Early pioneers often worked without any protective shielding, as the long-term dangers of ionizing radiation were entirely unknown. [4] These scientists frequently developed skin lesions and burns on their hands, which served as a grim but clear indicator that radiation was interacting deeply with biological cells. [2] Rather than deterring them, these observed reactions fueled the hypothesis that if radiation could destroy healthy tissue, it might also destroy malignant tumors.

# Clinical Origins

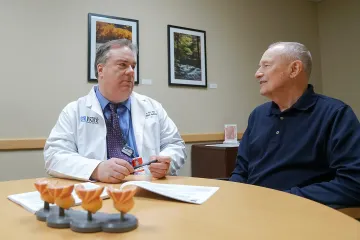

The first attempts to treat cancer using radiation began in 1896, almost simultaneously with the discovery of the technology. [6] Emil Grubbé, a medical student in Chicago, is widely credited with treating the first cancer patient with X-rays. He applied radiation to a patient with a carcinoma of the breast. [3] Similarly, in France, Victor Despeignes treated a patient with a stomach tumor using X-rays in July 1896. [4]

These treatments were rudimentary. Doctors used low-voltage equipment that could only penetrate the shallowest layers of the skin. They lacked a way to measure the dose, so they often applied radiation until the patient developed a visible skin reaction, known as erythema (reddening of the skin), on the assumption that this signaled the cancer had received a sufficient "dose." [4] This trial-and-error approach was grueling for patients and lacked the precision associated with modern oncology.

The early 20th century saw the widespread adoption of "brachytherapy," or internal radiation, thanks to the availability of radium. [4] Small capsules of radium were implanted directly into or near tumors. This offered a more localized delivery method compared to the external beams of the time, which were often unfocused and scattered. [2] While effective for specific superficial tumors, the lack of standardized protocols meant that outcomes varied wildly between different clinics and physicians. [3]

# Treatment Evolution

One of the most significant changes in the history of the field was the introduction of fractionation. In the late 1920s, a French radiologist named Henri Coutard observed that administering a high dose of radiation in one sitting often caused unacceptable damage to healthy surrounding tissues. [4] He proposed a radical idea: divide the total treatment dose into smaller, daily fractions over several weeks.

This approach allowed healthy cells to recover between treatments, while malignant cells, which often divide more rapidly and are less efficient at repairing themselves, accumulated damage and eventually died. [4] This concept of "fractionation" is a foundational principle that remains in use today. It moved radiotherapy from a reactive, unpredictable procedure to a managed, systematic treatment strategy. [7]
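The trade-off behind fractionation can be illustrated with a small calculation. The sketch below uses the linear-quadratic model and its biologically effective dose (BED), which this article does not itself describe; it is a later textbook formalism, brought in here only as an assumption. The α/β values (10 Gy for tumor, 3 Gy for late-responding healthy tissue) are conventional illustrative figures, not clinical guidance, and the two schedules are hypothetical. The model is also known to be less reliable at very large single doses, so the numbers should be read as a rough comparison only.

```python
def biologically_effective_dose(n_fractions: int,
                                dose_per_fraction_gy: float,
                                alpha_beta_gy: float) -> float:
    """BED = n * d * (1 + d / (alpha/beta)) under the linear-quadratic model."""
    return n_fractions * dose_per_fraction_gy * (
        1 + dose_per_fraction_gy / alpha_beta_gy
    )


# Assumed, illustrative alpha/beta ratios (not taken from the article).
TUMOR_ALPHA_BETA_GY = 10.0
LATE_TISSUE_ALPHA_BETA_GY = 3.0

# Hypothetical schedules: (number of fractions, dose per fraction in Gy).
SCHEDULES = {
    "single 20 Gy dose": (1, 20.0),
    "30 fractions of 2 Gy": (30, 2.0),
}

for name, (n, d) in SCHEDULES.items():
    tumor = biologically_effective_dose(n, d, TUMOR_ALPHA_BETA_GY)
    late = biologically_effective_dose(n, d, LATE_TISSUE_ALPHA_BETA_GY)
    print(f"{name}: tumor BED = {tumor:.1f} Gy, late-tissue BED = {late:.1f} Gy")
```

Under these assumptions, the fractionated schedule yields a higher effective dose to the tumor and a lower one to late-responding healthy tissue than the single large dose, which is the pattern Coutard's approach exploited.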

# Technological Progress

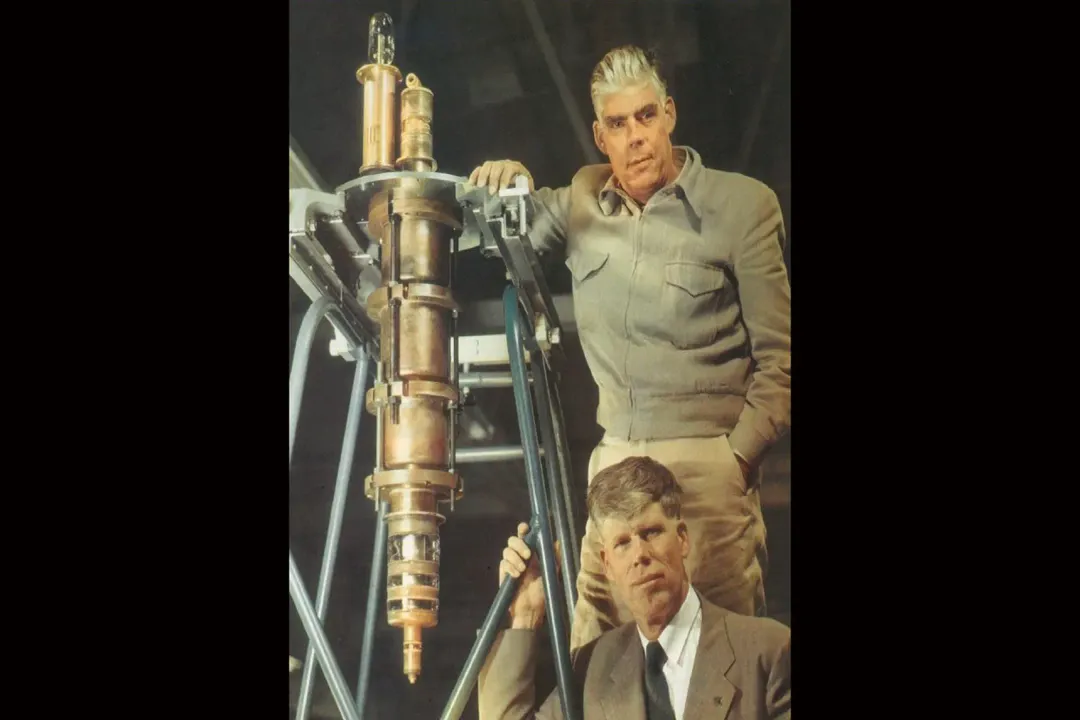

Following the success of fractionation, the field faced a mechanical barrier: energy output. Early X-ray machines, known as orthovoltage units, operated at relatively low energies. They struggled to reach deep-seated tumors without burning the skin surface. [4] Clinicians were desperate for machines that could deliver higher-energy beams, which would deposit the maximum dose deeper inside the body and spare the skin.

The mid-20th century provided the solution. In the 1950s, the introduction of Cobalt-60 teletherapy units allowed doctors to use gamma rays, which had much higher penetration power than previous X-ray devices. [4] This marked the dawn of the "megavoltage" era. [4] It allowed for the treatment of internal cancers—such as those in the prostate, lung, or brain—that were previously considered inaccessible to radiotherapy. [5]

Shortly after Cobalt units, the linear accelerator (LINAC) changed the landscape entirely. Unlike radioactive isotopes that decayed over time, LINACs generated X-rays electronically, allowing for adjustable energy and intensity. [4] These machines could be turned off when not in use, offering a significant safety advantage over radioactive sources.

# Safety Shifts

The transition from the early era of discovery to the modern era involved a massive shift in how doctors viewed patient safety. In the early 1900s, the "goal" was to see a visible burn on the skin, as it was considered the only evidence that the radiation was working. [2] Today, skin reactions are considered a side effect to be minimized, not a goal to be achieved.

The table below summarizes the core differences between the early years of radiotherapy and the modern standards.

| Feature | Early Era (1896-1930s) | Modern Era (1950s-Present) |
|---|---|---|
| Primary Goal | Visible skin reaction | Precise tumor destruction |
| Dosage | Single large dose (or guessing) | Fractionated, calculated doses |
| Energy Source | Low-voltage X-ray/Radium | LINAC/High-energy photons |
| Targeting | Visual/anatomical landmarks | CT/MRI/PET-based image guidance |
| Safety Focus | Limited; high exposure risk | Strict radiation protection protocols |

This progression demonstrates that the history of radiotherapy is not just about the invention of machines; it is about the mastery of biology. Early practitioners relied on the physics of the beam, while modern practitioners rely on the biology of the tumor.

# Clinical Precision

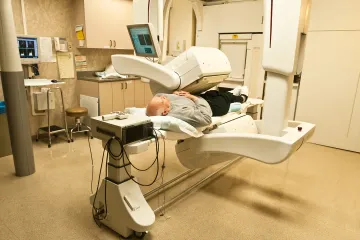

The final stage of this historical arc involves the integration of imaging technology. For most of the 20th century, radiation oncologists relied on two-dimensional X-ray films to plan treatments. They had to visualize the tumor’s position based on skeletal structures, which was imprecise. [4]

With the advent of computed tomography (CT) in the 1970s and 1980s, the field underwent another transformation. Doctors could now see the tumor in three dimensions, allowing them to shape the radiation beam to fit the exact contours of the cancer. [4] This reduced the dose delivered to the healthy organs surrounding the tumor, such as the heart, lungs, or spinal cord.

Modern advances, such as Intensity-Modulated Radiation Therapy (IMRT) and Image-Guided Radiation Therapy (IGRT), represent the culmination of this timeline. IMRT modulates the intensity of the beam while the machine moves around the patient, effectively sculpting the radiation to spare healthy tissue, and IGRT uses imaging at the time of treatment to confirm the position of the target. [10]

# Medical Evolution

Looking back at the trajectory of radiation therapy, one cannot help but notice the resilience of the early medical pioneers. They were operating in an environment where the very tool they were using was damaging them. There is a distinct "learning curve" that defines this history: the shift from the heroic, experimental phase to the era of calculated, data-driven treatment.

In the early decades, the patients who sought treatment were often those for whom surgery had failed or was not possible. They had little to lose. This created a culture of aggressive experimentation. As time passed, the medical community established registries and professional societies, the forerunners of today's oncology associations, which standardized how radiation was measured and prescribed. [3]

This standardization removed the "luck" factor. If you were a patient in 1910, your outcome depended heavily on the specific machine and the intuition of the physician. In 2026, the variation in treatment planning is significantly reduced, though not eliminated, by rigorous software and physics calculations.

# Future Outlook

The origins of radiation therapy provide a clear lesson on how innovation happens. It rarely arrives fully formed. It starts with a discovery, moves to dangerous and messy implementation, enters a phase of refinement through clinical data (like the move to fractionation), and eventually becomes integrated with other technologies like imaging and computing.

What started as an accidental observation of a glowing screen in a German lab has become one of the most effective tools in modern medicine, with radiation therapy involved in the treatment of approximately half of all cancer patients. [10] The history of the field is a testament to the persistence of those early physicians who, despite lacking the tools we have today, laid the groundwork for a discipline that focuses on precision, biology, and patient outcomes. The future of this field, while rooted in the physics of the late 19th century, is now almost entirely dependent on the digital integration of real-time patient data.

# Citations

1. An Overview on Radiotherapy: From Its History to Its Current ... - PMC
2. History of radiation therapy - Wikipedia
3. History of Radiation Oncology in the United States - The ASCO Post
4. A short history of Radiotherapy - Part 1: From the discovery of X-rays ...
5. MSK Radiation Therapy: Timeline of Progress
6. The First Person to Receive Radiotherapy Treatment
7. Pioneers of radiotherapy - Siemens Healthineers MedMuseum
8. Advances in Radiotherapy and Implications for the Next Century
9. A Brief History of Cancer | American Cancer Society
10. Radiation Therapy for Cancer - NCI